The completion of the most recent offering of the 10x10 ("ten by ten") course at this year's American Medical Informatics Association (AMIA) 2014 Annual Symposium marks ten years of existence of the course. Looking back to its inauspicious start in the fall of 2005, the 10x10 program has been a great success and remains a significant part of my work life. It has not only cemented my passion and love for teaching, but also gives me great motivation to keep up-to-date broadly across the entire informatics field.

For those who are unfamiliar with the 10x10 course, it is a repackaging of the introductory course in the OHSU Biomedical Informatics Graduate Program. This is the course taken by all students who enter the clinical informatics track of the OHSU program and aims to provide a broad overview of the field and its language. The course has no prerequisites, and does not assume any prior knowledge of healthcare, computing, or other topics. The course has ten units of material, with the graduate course spread over ten weeks and the 10x10 version decompressed to 14 weeks. The 10x10 course also features an in-person session at the end to bring participants together to interact and present project work. (The in-person session is optional for those who might have a hardship in traveling to it.)

The AMIA 10x10 program was launched in 2005 when AMIA wanted to explore online educational offerings. When the cost for development of new materials was found to be prohibitive, I presented a proposal to the AMIA Board of Directors for adapting the introductory online course I had been teaching at Oregon Health & Science University (OHSU) since 1999. Since then-President of AMIA Dr. Charles Safran was calling for one physician and one nurse in each of the 6000 US hospitals to be trained in informatics, I proposed the name 10x10, standing for "10,000 trained by 2010." We all agreed that the course would be mutually non-exclusive, i.e., other universities could offer 10x10 courses while OHSU could continue to employ the course content in other venues.

The OHSU course has, however, been the flagship course of the 10x10 program, and by the end of 2010, a total of 999 people had completed it. We did not reach anywhere near that vaunted number of 10,000 by 2010, although we probably could have accommodated that many had they come forward, since distance learning is very scalable. After 2010 the course continued to be popular and in demand, so we continued to offer "10x10" and have done so to the present time.

This year marks the tenth year that the course has been offered, and some 1837 people have completed the OHSU offering of 10x10. This includes not only general offerings with AMIA, but also those delivered to various partners, including the American College of Emergency Physicians, the Academy of Nutrition and Dietetics, the Mayo Clinic, the Centers for Disease Control and Prevention, the New York State Academy of Family Physicians, and others. The course has also had international appeal, having been translated and adapted for Latin America by colleagues at Hospital Italiano of Buenos Aires in Argentina and offered in its English version, with some local content and perspective added, in collaboration with Gateway Consulting in Singapore. Additional international offerings have been sponsored by King Saud University of Saudi Arabia and the Israeli Ministry of Health.

All told, the OHSU offering of the 10x10 program has accounted for 76% of the 2406 people who have completed the various 10x10 courses. The chart below shows the distribution of the institutions offering English versions of the course.

The 10x10 course has also been good for our informatics educational program at OHSU. As the course is a replication of our introductory course in our graduate program (BMI 510 - Introduction to Biomedical and Health Informatics), those completing the OHSU 10x10 course can optionally take the final exam for BMI 510 and then be eligible for graduate credit at OHSU (if they are eligible for graduate study, i.e., have a bachelor's degree). About half of the people completing the course have taken and passed the final exam, with about half of them (25% of total) enrolling in either our Graduate Certificate or Master of Biomedical Informatics program. Because our graduate program has a "building block" structure, where what is done at lower levels can be applied upward, we have had one individual who even started in the 10x10 course and progressed all the way to obtain a Doctor of Philosophy (PhD) from our program.

As I said at the end of 2010, the 10x10 program will continue as long as there is interest from individuals who want to take it. Given the continued need for individuals with expertise in informatics, along with rewarding careers for them to pursue in the field, I suspect the course will continue for a long time.

Sunday, November 30, 2014

Friday, November 21, 2014

The Year in Review of Biomedical and Health Informatics - 2014

At this year's American Medical Informatics Association (AMIA) 2014 Annual Symposium, I was honored to be asked, along with fellow Oregon Health & Science University (OHSU) faculty member Dr. Joan Ash, to deliver one of the annual Year in Review sessions.

This session was first delivered in 2006 by Dr. Dan Masys, who each year presented a review of the past year's research publications and major events. Over time, parts of the annual review were broken off and focused on specific topics. The first of these were the annual reviews in translational bioinformatics (Dr. Russ Altman) and clinical research informatics (Dr. Peter Embi, an OHSU alumnus), presented at the annual AMIA Joint Summits on Translational Science. This year additional topics were peeled off, such as Informatics in the Media Year in Review (Dr. Danny Sands) as well as Public and Global Health Informatics Year in Review (Dr. Brian Dixon, Dr. Jamie Pina, Dr. Janise Richards, Dr. Hadi Kharrazi, and OHSU alumnus Dr. Anne Turner).

This pretty much left clinical informatics as the major topic for Joan and me to cover. However, I had also noted that this separating out of specific aspects of informatics left no one covering the fundamentals of informatics, i.e., topics underlying and germane to all aspects of informatics. We also noted that qualitative and mixed methods research had been historically underrepresented in these annual reviews. Therefore, Joan and I set the scope of our Year in Review session to clinical informatics and foundations of biomedical and health informatics. For research that was evaluative, Joan would cover qualitative and mixed methods studies, while I would cover studies using predominantly quantitative methods.

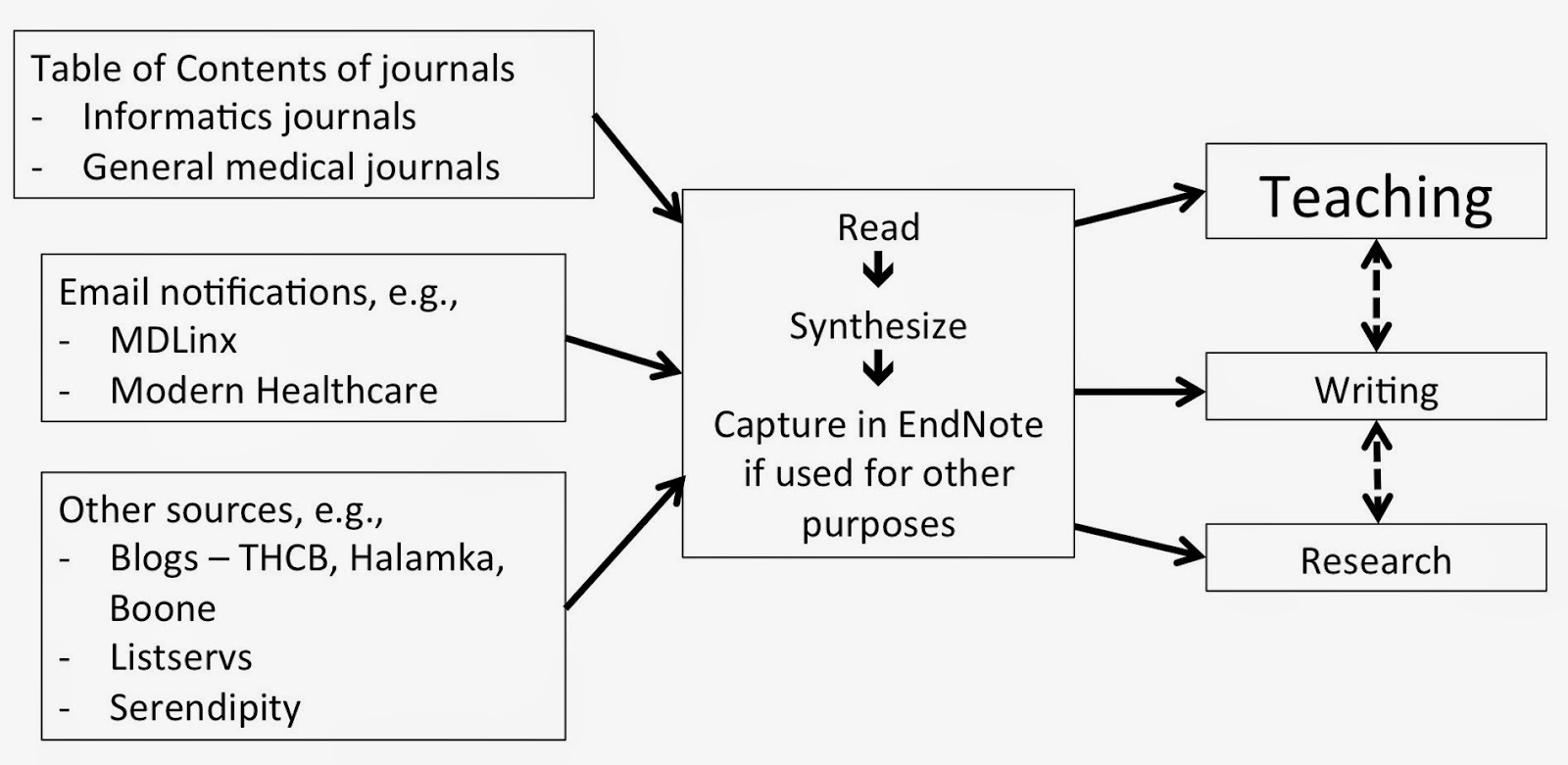

We also believed that while Dan's methods for gathering publications was sound, different approaches worked better for us. For myself in particular, I decided to plug the annual review process into my existing workflow of uncovering important science and events in the field, which I spend a good amount of time doing in order to keep my introductory (10x10 and OHSU) course up to date. I comprehensively scan the literature as well as the news on a continuous basis to keep my teaching materials (and knowledge!) up to date. I actually created a slide in the presentation to show my normal workflow "methods," which informed my review and is shown below.

Our first annual review was presented at the AMIA 2014 Annual Symposium on November 18, 2014. Continuing Dan's tradition, we created a Web page that has a description of our goals and methods, a link to our slides, and all of the articles cited in our presentation. We also kept the traditional time frame for the "year" in review, which was from October 1, 2013 to September 30, 2014. One additional feature of the session that we added was to offer up the last 15 minutes for attendees to make their own nominations for publications or events to be included.

Joan and I were pleased with how the session went, and we were gratified by the positive response from attendees. We hope to be invited back to present the session again next year!

Saturday, November 15, 2014

Continued Concerns for Building Capacity for the Clinical Informatics Subspecialty - 2014 Update

The first couple of years of the clinical informatics subspecialty have been a great success. Last year, about 450 physicians became board-certified after the first certification exam, with many aided by the American Medical Informatics Association (AMIA) Clinical Informatics Board Review Course (CIBRC) that I directed. This year, another cohort took the exam, with many again helped by the CIBRC. In addition this year, the Accreditation Council for Graduate Medical Education (ACGME) released its initial accreditation guidelines, and four programs (including ours at Oregon Health & Science University [OHSU]) became accredited, with a number of other programs in the process of applying.

Despite these initial positive outcomes, I and others still have many concerns about how we will build appropriate capacity in the new subspecialty. In particular, many of us are concerned that the number of newly certified subspecialists will slow to a trickle after 2018, once the "grandfathering" pathway is no longer available and the only route to certification will be through a two-year, on-site, full-time clinical fellowship. Indeed, the single piece of advice I give to any physician who is currently "practicing" clinical informatics is to do whatever they can to get certified prior to 2018. It will be much more difficult to become certified after that, since the only pathway will be an ACGME-accredited fellowship.

I previously raised concerns about these challenges in postings last year and the year before, and this one represents an update leading into the annual AMIA Symposium. Colleague Chris Longhurst, whose fellowship program was the first to achieve accreditation, has expressed similar concerns in interviews by CMIO Magazine and HISTalk.

Looking forward, I see four major problems for the subspecialty. I will address each of these and then (since I am a solutions-oriented person) propose what I believe would be a better approach to the subspecialty.

The subspecialty excludes many physicians who do not have a primary specialty

When the AMIA leadership started developing a proposal for professional recognition of physicians in clinical informatics around 2006, they were advised that creating a new primary specialty would be a lot more difficult to sell politically and that they should instead propose a new subspecialty. This would be unique as a subspecialty of all medical specialties. I am sure that advice was correct, but we have unfortunately excluded those who never obtained a primary clinical specialty or whose specialty certification has lapsed. These individuals can still be highly capable informaticians, and in fact many are. The alternate AMIA Advanced Interprofessional Informatics Certification being developed may serve these physicians, but it would be much better as a profession to have all physicians under a single certification.

The clinical fellowship model will exclude from training the many physicians who gravitate into informatics well after their initial training

The majority of physicians who work in clinical informatics did not start their careers in the field. Many gravitated into the field long after they completed their initial medical training, took a job, and established geographic roots and families. The distance learning graduate programs offered by OHSU and other universities have been a boon to these individuals, as they can train in informatics while keeping their current jobs and not needing to uproot their families. Many of these individuals have great experience, and many passed the initial board exam. They are clearly capable.

After 2018, the "grandfathering" pathway will no longer be an option, and the only way to achieve board certification will be via a full-time two-year fellowship. It is interesting to note the recent advice I heard expressed by Dr. Robert Wah, President of the American Medical Association. He noted that many physicians have moved beyond direct clinical care to have an impact in medicine in other ways. But he advised that every physician should establish their clinical career first and then move on to other pursuits. This too is at odds with the clinical fellowship model that almost by necessity must come during one's primary medical training.

In a similar vein, a number of colleagues who are subspecialists in other fields of medicine express concern that a clinical informatics subspecialty fellowship would add two more years to the already lengthy training required of most highly specialized physicians. As much as I am an advocate of formal informatics training, I also recognize, and would even encourage, such training being integrated with other clinical training, especially in subspecialties.

The clinical fellowship model also is not the most appropriate way to train clinical informaticians

Even for those who are able to complete clinical informatics fellowships, the classic clinical fellowship training model is problematic, as those of us applying to ACGME have learned. I likened this process a few months ago to fitting square pegs into round holes.

Clinical medicine is very well suited to episodic learning and hence rotations. A patient comes in, and their current presentation is a nice segue into learning about the diseases they have, the treatments they are being given, and the course of their disease(s). Even patients being followed longitudinally in a continuity clinic have episodes of care with the healthcare system that provide good learning.

But informatics is a different kind of topic. Informatics is not an activity that takes place in episodes. You can't really learn from episodic exposure to it. Good informatics projects, such as a clinical decision support implementation or a quality improvement initiative, take place over time. In fact, learning is compromised when you jump in and/or leave in the middle. Informatics projects are also carried out by teams of people with diverse skills with whom the informatician must work. I would assert that better learning takes place when the informatics trainee encounters specific informatics issues (standards, security, change management, etc.) in the context of long-term projects.

There are other concerns that have arisen about various aspects of the ACGME accreditation process. One program was declined accreditation because a program director was not in the same primary specialty as the Residency Review Committee (RRC), despite the fact that clinical informatics is supposed to span all specialties. ACGME also requires any fellowship program, no matter how small, to have 2.0 FTE of combined director and faculty time. This may make sense in a clinical setting where faculty are simultaneously engaged in care of the same patient, but does not fit well when a trainee is working on single aspects of a larger project. Another ACGME requirement is for all faculty who teach to be named, and for those who are named to be board-certified clinical informaticians. This again does not make sense in the context of informatics being an activity with participants from many disciplines outside of medicine, some even outside of healthcare. Finally, ACGME requires fellows to be paid. This is easier to do when fellows are actively involved in the clinical operations of the hospital. Even if these trainees cannot bill, they do make it easier for attending physicians and hospitals to bill.

The funding model for fellowships creates challenges for their sustainability

A final challenge for clinical informatics fellowships is their funding and sustainability. Most subspecialty training in the US is funded by academic hospitals, and part of the "grand bargain" of such training is that clinical trainees provide inexpensive labor, which "extends" the ability of their teachers to provide care. The various clinical units have incentive to do this because it increases the ability of the units to provide and bill for services. Clinical informatics is different in that fellows will be unlikely to provide direct capacity benefit to academic clinical informatics departments. Our department at OHSU, for example, does not have operational clinical IT responsibilities.

Furthermore, these fellows will be doing their clinical practice in their primary specialties, and not their clinical informatics subspecialty. The primary specialties will include the full gamut of medical specialties such as internal medicine, radiology, pathology, and others. Even if fellows will be able to bill, it will be challenging within organizations for units to divide up the revenues.

Solutions

In last year's post I proposed a solution addressing last year's description of these problems, and what follows is an updated version. There are approaches that could be rigorous enough to ensure an educational and training experience equally robust as, if not more robust than, the proposed fellowship model. Such an approach would no doubt test the boundaries of a tradition-bound organization like ACGME but could also show innovation reflective (and indeed required) of modern medical training generally.

A first solution is to provide a pathway for any physician to become certified in clinical informatics, whether having a primary board certification or not. Informatics as a subspecialty of any medical specialty is a contortion. I do not buy that one cannot be a successful clinical informatician without having a primary board certification. I and likely everyone else in the field know of too many counter-examples to that.

Moving on to specifics of training, last year I noted that there should be three basic activities of clinical informatics subspecialty trainees:

- Clinical informatics education to master the core knowledge of the field

- Clinical informatics project work to gain skills and practical experience

- Clinical practice to maintain their skills in their primary medical specialty

Next, how would trainees get their practical hands-on project work? Again, many informatics programs, certainly ours, have developed mechanisms by which students can do internships or practicums in remote locations through a combination of affiliation agreements, local mentoring, and remote supervision. While our program currently has students doing these for 3-6 months at a time, I see no reason why the practical experience could not be expanded to a year or longer. Strict guidelines for experience and both local and remote mentoring could be put in place to ensure quality.

Lastly, what about clinical practice? As noted above, I disagree that this should even be a requirement. But if it were, requiring a trainee to perform a certain volume of clinical practice, while adhering to all appropriate requirements for licensure and maintenance of certification, should be more than adequate to ensure practice in their primary specialty. Many informatics distance learning students are already maintaining their clinical practices to sustain their livelihood. Making clinical practice an explicit requirement, rather than something requiring supervision, will also allow training to be more financially viable for the fellow. Any costs of tuition and practical work could easily be offset by clinical practice revenue.

There would need to be some sort of national infrastructure to set standards and monitor progress of clinical informatics trainees. There are any number of organizations that could perform this task, such as AMIA, and it could perhaps be a requirement of accreditation. Indeed, ACGME and the larger medical education community may learn from alternative approaches like this for training in other specialties. One major national concern these days is that the number of residency positions for medical school graduates is not keeping up with the increases in medical school enrollment or, for that matter, the national need for physicians. It is possible that alternative approaches like this could expand the capacity of all medical specialties and subspecialties, and not just clinical informatics.

Wednesday, November 12, 2014

Ebola is a Reason for Implementing ICD-10, or is it? What is the Role of Coding in the Modern EHR Era?

The recent Ebola outbreak has been used to justify or advocate many things. Among them is further advocacy for the transition to the ICD-10 coding system. However, discussing Ebola and coding also gives us a chance to pause and address some larger issues around coding in the modern era of the electronic health record (EHR).

Coding of medical records is a requirement for billing, i.e., diagnosis codes must be included on a claim to obtain reimbursement for services from an insurer, whether a private insurance company or the government. The coding system currently used in the United States is ICD-9-CM, but its replacement with ICD-10-CM has been mandated, although the deadline has been postponed three times over the last four years. This coding also potentially creates a vast source of data for research, surveillance, and other purposes. Indeed, there is a whole body of research based on such "claims data," with one of the arguments for its use being that what this data lacks in depth or completeness is made up for by its volume [1].

ICD-9-CM has many limitations as a coding system. Probably its biggest limitation is that many codes cover a whole swath of diseases. In addition, the meaning of its "not otherwise specified" codes may change over time, when one of the conditions that was not otherwise specified is given a code of its own.

What does this have to do with Ebola? In ICD-9-CM, Ebola is one of many diagnoses covered by a single code, 078.89 - Other specified diseases due to viruses. There are about 35 viruses that map to this code, including some common ones such as coronavirus and rotavirus. ICD-10-CM, on the other hand, has a specific code, A98.4 - Ebola virus disease.
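To make the granularity point concrete, here is a minimal sketch of why a shared code cannot identify a single disease. The mappings are a toy subset built only from the codes named above; the function names and diagnosis labels are hypothetical, and the ICD-10-CM codes for the other viruses are deliberately left out rather than guessed.

```python
# Toy subset from the text: three of the roughly 35 viruses said to share 078.89.
ICD9_CM = {
    "Ebola virus disease": "078.89",
    "Coronavirus infection": "078.89",
    "Rotavirus infection": "078.89",
}

# Only the Ebola code is given in the text, so only it appears here.
ICD10_CM = {
    "Ebola virus disease": "A98.4",
}


def diagnoses_sharing_code(code, mapping):
    """Return every diagnosis in the mapping that is assigned the given code."""
    return sorted(dx for dx, assigned in mapping.items() if assigned == code)


def is_identifiable(diagnosis, mapping):
    """True if the diagnosis is the only one assigned its code in this mapping."""
    code = mapping[diagnosis]
    return diagnoses_sharing_code(code, mapping) == [diagnosis]


print(diagnoses_sharing_code("078.89", ICD9_CM))
print(is_identifiable("Ebola virus disease", ICD9_CM))
print(is_identifiable("Ebola virus disease", ICD10_CM))
```

With ICD-9-CM, a claim carrying 078.89 tells us only that the patient had one of the diseases in the group, whereas A98.4 points to Ebola alone. Whether that alone justifies the transition is a separate question.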

Does this provide justification for the move to ICD-10-CM? ICD-10-CM is clearly more detailed and granular, and in fact may be excessively granular. Another concern about implementing ICD-10-CM is the cost to physicians and hospitals, which is mostly unknown, with estimates varying widely [2, 3]. There is no question that ICD-9-CM falls short and that ICD-10-CM does have a specific code in the case of Ebola. But is this itself a reason justifying the move to ICD-10-CM, or are there other ways to determine from a medical record whether a diagnosis has been made?

When controversial questions arise, I always find it useful to step back and ask some questions, such as what we are trying to accomplish and whether this is the best way of doing so. There is actually a body of scientific literature that has assessed the consistency and value of coding medical records. One systematic review of United Kingdom coding studies found that coding accuracy in the UK varied widely, with a mean accuracy of 80.3% for diagnoses and 84.2% for procedures [4]. The range of accuracy, however, was 50.5-97.8%. Another systematic review looked at heart failure diagnoses in Canadian hospitals, finding both ICD-9 and ICD-10 coding to vary widely in sensitivity for the actual diagnosis (29-89%) and in kappa scores of inter-assigner agreement (0.39-0.84) [5]. A US-based systematic review of identifying heart failure with diagnostic coding data found positive predictive value to be reasonably high (87-100%) but sensitivity to be lower [6]. Studies of diagnosis codes for hypertension [7] and obesity [8] found low sensitivity but higher specificity.
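For readers less familiar with the measures reported in these studies, the following is a small sketch of how they are computed when coded diagnoses are compared against chart review. All counts are invented for illustration and come from none of the cited studies; the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CodingValidation:
    """Counts from comparing diagnosis codes against chart review (the reference)."""
    tp: int  # code present, chart review confirms the diagnosis
    fp: int  # code present, chart review does not confirm it
    fn: int  # code absent, chart review finds the diagnosis
    tn: int  # code absent, chart review finds no diagnosis

    def sensitivity(self):
        # Share of true cases that the codes capture.
        return self.tp / (self.tp + self.fn)

    def specificity(self):
        # Share of non-cases that are correctly left uncoded.
        return self.tn / (self.tn + self.fp)

    def ppv(self):
        # Share of coded cases that are true cases.
        return self.tp / (self.tp + self.fp)


def cohen_kappa(both_yes, only_a, only_b, both_no):
    """Cohen's kappa for two coders making a yes/no call on the same records."""
    n = both_yes + only_a + only_b + both_no
    observed = (both_yes + both_no) / n
    a_yes = (both_yes + only_a) / n
    b_yes = (both_yes + only_b) / n
    # Agreement expected by chance if the two coders were independent.
    expected = a_yes * b_yes + (1 - a_yes) * (1 - b_yes)
    return (observed - expected) / (1 - expected)


# Invented example: 1000 records, 100 of which truly have the diagnosis.
example = CodingValidation(tp=60, fp=5, fn=40, tn=895)
print(round(example.sensitivity(), 3))
print(round(example.specificity(), 3))
print(round(example.ppv(), 3))
print(round(cohen_kappa(both_yes=40, only_a=10, only_b=10, both_no=40), 3))
```

The invented counts show the pattern described above: when coders rarely assign a code wrongly but often omit it, positive predictive value and specificity can be high while sensitivity remains low.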

While these studies show that coding data is imperfect, there was a time when the predominance of paper medical records meant there was no alternative source of data that could be analyzed. However, we are now in an era of widespread EHR adoption, which means that there are other sources of data to document diagnoses, testing, and treatments. In the case of Ebola, we have many other possible sources of data, as described by the Centers for Disease Control and Prevention.

While there is certainly no evidence that our entire medical record coding enterprise should be immediately abandoned, there is definitely a case to reassess its necessity and value in the modern EHR era. This is especially the case when we are using EHR data for so many other purposes [9]. As with many questions, dispassionate science and analysis are the best approach to providing us with answers.

References

1. Ferver, K, Burton, B, et al. (2009). The use of claims data in healthcare research. The Open Public Health Journal. 2: 11-24.

2. Hartley, C and Nachimson, S (2014). The Cost of Implementing ICD‐10 for Physician Practices – Updating the 2008 Nachimson Advisors Study. Baltimore, MD, Nachimson Advisors, LLC. http://www.ama-assn.org/resources/doc/washington/icd-10-costs-for-physician-practices-study.pdf.

3. Kravis, TC, Belley, S, et al. (2014). Cost of converting small physician offices to ICD-10 much lower than previously reported. Journal of AHIMA, http://journal.ahima.org/wp-content/uploads/Week-3_PDFpost.FINAL-Estimating-the-Cost-of-Conversion-to-ICD-10_-Nov-12.pdf.

4. Burns, EM, Rigby, E, et al. (2012). Systematic review of discharge coding accuracy. Journal of Public Health. 34: 138-148.

5. Quach, S, Blais, C, et al. (2010). Administrative data have high variation in validity for recording heart failure. Canadian Journal of Cardiology. 26: e306-e312.

6. Saczynski, JS, Andrade, SE, et al. (2012). A systematic review of validated methods for identifying heart failure using administrative data. Pharmacoepidemiology and Drug Safety. 21: 129-140.

7. Tessier-Sherman, B, Galusha, D, et al. (2013). Further validation that claims data are a useful tool for epidemiologic research on hypertension. BMC Public Health. 13: 51. http://www.biomedcentral.com/1471-2458/13/51.

8. Lloyd, JT, Blackwell, SA, et al. (2014). Validity of a claims-based diagnosis of obesity among medicare beneficiaries. Evaluation and the Health Professions. Epub ahead of print.

9. Hersh, WR (2014). Healthcare Data Analytics. In Health Informatics: Practical Guide for Healthcare and Information Technology Professionals, Sixth Edition. R. Hoyt and A. Yoshihashi. Pensacola, FL, Lulu.com: 62-75.

Wednesday, November 5, 2014

Two Recent Research Briefs Reiterate the Need for Clinical Decision Support

One of the seminal papers in informatics was published in 1978, when Octo Barnett and colleagues demonstrated that while computer-based feedback could positively impact physician decision-making, that impact went away when the feedback was removed [1]. This has always been a rationale for clinical decision support (CDS), which helps clinicians by reminding them to do the right thing rather than by imparting lasting learning.

Two recent research briefs demonstrate how challenging the task of getting physicians to be appropriate stewards of antibiotics is, and they have implications for CDS. Antibiotics were one of the miracles of 20th century medicine, leading to substantial ability to fight infection. They are still an important part of the armamentarium of medicine, but their value is threatened by growing resistance of organisms [2].

One research brief finds that the likelihood of antibiotic prescribing becomes higher as the day goes on, which the researchers call "decision fatigue" [3]. Another brief shows that implementation of a physician audit and feedback program resulted in reducing inappropriate antibiotic prescribing, but that removal of the program resulted in a return toward baseline prescribing habits [4]. The same pattern has been found in other similar programs [5].

Practicing medicine is a complex task. Although physicians have always been assumed to maintain the entire knowledge base in their heads, decades of informatics-related research have shown otherwise. Of course, the way we implement CDS is imperfect, often providing advice that physicians do not need [6]. A big challenge going forward will be to optimize the signal vs. noise and determine the best ways to deliver that signal.

References

1. Barnett, GO, Winickoff, R, et al. (1978). Quality assurance through automated monitoring and concurrent feedback using a computer-based medical information system. Medical Care. 16: 962-970.

2. Anonymous (2013). Antibiotic Resistance Threats in the United States, 2013. Atlanta, GA, Centers for Disease Control and Prevention.

3. Linder, JA, Doctor, JN, et al. (2014). Time of day and the decision to prescribe antibiotics. JAMA Internal Medicine. Epub ahead of print.

4. Gerber, JS, Prasad, PA, et al. (2014). Durability of benefits of an outpatient antimicrobial stewardship intervention after discontinuation of audit and feedback. Journal of the American Medical Association. Epub ahead of print.

5. Arnold, SR and Straus, SE (2005). Interventions to improve antibiotic prescribing practices in ambulatory care. Cochrane Database of Systematic Reviews. 2005(4): CD003539.

6. Nanji, KC, Slight, SP, et al. (2014). Overrides of medication-related clinical decision support alerts in outpatients. Journal of the American Medical Informatics Association. 21: 487-491.
