It has become a tradition for me in this blog to post some reflections in the last posting of each year. This year is no different, and this posting is the end of 2014 installment.

Each year there has been a theme to my annual reflections. As the start of this blog was very much tied to the Health Information Technology for Economic and Clinical Health (HITECH) Act, the theme of 2009 concerned the deteriorating economy and its impact on the Oregon Health & Science University (OHSU) informatics program, the American Recovery and Reinvestment Act (ARRA), and the HITECH Act within ARRA. In 2010, I focused on the rolling out of the HITECH Act, especially the workforce development grants that were to become a major part of my work life in the following years. In 2011, I described the implementation of our HITECH workforce grants. By 2012, the beginning of the end for the HITECH funding was at hand, while in 2013, I described the transition from HITECH funding and a number of new developments, including the Informatics Discovery Lab (IDL) at OHSU and the rollout of the clinical informatics subspecialty.

What is the theme for 2014? One thing for certain is that work and life have gone on without HITECH. There were many great new accomplishments for me and the OHSU informatics program this past year, such as achieving Accreditation Council for Graduate Medical Education (ACGME) accreditation for our new clinical informatics fellowship that will be launched in 2015, new grants from the National Institutes of Health (NIH) Big Data to Knowledge (BD2K) program, and a new focus on competencies for medical (and other health professional) students in clinical informatics.

Despite the grants of HITECH becoming a distant memory, the impact of the HITECH Act on the informatics field cannot be overstated. Of course the meaningful use program is still moving along, even if Stage 2 has been daunting and the prospect of penalties for not meeting meaningful use becomes a possible reality. But the informatics world is truly a different place now than before the HITECH Act. The road has been rocky, but EHR adoption has become near-universal in US hospitals and very substantial in physician offices. The fact that we now lament the problems of data and its lack of interoperability demonstrates progress from less than a decade ago, when we lamented healthcare being too paper-based. Much has been written about HITECH, often with a tinge (sometimes more) of politics thrown in. My thoughts resonate most with those who view HITECH in the context of its origins and acknowledge its successes and limitations, such as Robert Wachter and John Halamka.

What lies ahead for 2015? Certainly the work described above that we have undertaken in 2014 will continue to play an important role. And as in all years, indeed in my whole career, there will be opportunities that emerge out of nowhere and turn out to be major activities.

Wednesday, December 31, 2014

Friday, December 12, 2014

Education in Informatics: Distinct Yet Integrative

One of the challenges we face in informatics education is how to call out its knowledge, skills, and competencies in the larger context of health and biomedicine. In other words, how do we teach its important contributions while recognizing that informatics does not exist in a vacuum?

I see this at all levels of education in which I am involved, from that of medical and other health professional students to those training for professional careers in informatics.

One example of this is seen in medical student education. The importance of informatics in the training of physicians is finally being recognized, yet the challenge is how to integrate appropriate informatics education into an environment where the evolution of the curriculum has been away from discrete courses to integration of all topics, typically organized into blocks and sometimes further divided into cases (i.e., case-based learning). Just as medical education no longer has standalone courses in biochemistry, pathology, physical examination and so forth, we should not aspire to have any sort of standalone informatics course either. Not only is informatics best learned in the context of solving real problems in clinical medicine, it also needs to be seen as integrated with the other subjects being learned.

The same applies to other healthcare professions. We must find ways to make informatics knowledge, skills, and competencies important, yet also integrated with their primary role as deliverers of healthcare.

Even for those training to work in informatics professionally, it is still important to understand its context. Some may be informatics professionals in clinical settings, public health settings, research settings, and even consumer-focused settings. The skilled informatician must know how to add value to those settings by best applying informatics.

This issue also plays out in one of the concerns I have for clinical informatics fellowships. As I have written before, I am troubled by the idea of a standalone, one-size-fits-all, two-years-on-the-ground fellowship that is required by ACGME rules. Two additional years of fellowship is a lot to ask of physicians who do not start meaningful earning until into their 30s or later. Several of my clinical faculty colleagues at OHSU have asked why fellows cannot train simultaneously in informatics and another discipline. Not only do I not object to such integrated training, I actually believe it would be a great boon for an oncologist, cardiologist, surgeon, etc. to simultaneously train in informatics along with his or her other discipline, especially if they plan to pursue informatics in the context of that discipline.

But all this integration of informatics aside, I still strongly assert the title of this posting, which is that informatics should be distinct with its knowledge, skills, and competencies. However, its training and practice should be appropriately integrated with other health, clinical, and biomedical aspects of where it is being applied.

Wednesday, December 10, 2014

Accolades for the Informatics Professor - Fall, 2014 Update

As always, I am pleased to share periodically with readers the various accolades and mentions that colleagues, projects, and I at Oregon Health & Science University (OHSU) have received in recent months. This posting covers the mentions in the latter half of 2014.

In the late summer, there was a mention of my role in the American Medical Association (AMA) Accelerating Change in Medical Education Program of grants to medical schools to advance change in medical education. The OHSU grant has a component of informatics, with a focus on teaching 21st century physicians about data that they will use to facilitate their practices and others will use to assess the quality of care they deliver. One article focused on our development of competencies in clinical informatics for medical students, while the other described how we are implementing them in our AMA grant project.

OHSU also received a mention in a Web page purporting to rank the Top 25 "healthcare informatics" programs by "affordability". I am not sure exactly how they get their cost figures, but the page does accurately describe our program (number 16 on their list).

I received some other mentions concerning the new clinical informatics subspecialty, one in an article just before this year's board exam as well as in an interview with Stanford Program Director, Dr. Chris Longhurst.

Of course, the new subspecialty is one of many changes that informatics education has undergone recently, as noted both in an article I wrote as well as in one where I was interviewed.

I gave a number of talks that were recorded this fall, including my kick-off of our weekly OHSU informatics conference series as well as a talk about our Informatics Discovery Lab at the 2nd Annual Ignite Health event in Portland. The latter has an interesting format of five minutes to talk with slides that automatically advance every 15 seconds (for a total of 20 slides). The talk on the IDL led to my being invited to moderate a panel on business opportunities in health information technology in Portland.

There was also some press around the new National Institutes of Health (NIH) Big Data to Knowledge (BD2K) grants we received. Related to Big Data, another magazine called out my blog posting from last year that data scientists must also understand general research methodology.

Another news item mentioned a project I am likely to write about more in the future that concerns OHSU establishing collaborations in informatics and other areas in Thailand.

Finally, a few accolades came from events of the AMIA Annual Symposium 2014. One was getting my picture in HISTalk in a mention of the Fun Run at this year's symposium. I was also interviewed by a reporter who wanted to follow up on why I selected the items that I did for my top ten events of the year in my Year in Review talk. It was nice to be able to elaborate some and also watch the tweeting that followed.

It is gratifying to receive these accolades, and of course I know I have to keep doing innovative and important work to maintain them.

Sunday, November 30, 2014

Ten Years of 10x10 ("Ten by Ten")

The completion of the most recent offering of the 10x10 ("ten by ten") course at this year's American Medical Informatics Association (AMIA) 2014 Annual Symposium marks ten years of existence of the course. Looking back to its inauspicious start in the fall of 2005, the 10x10 program has been a great success and remains a significant part of my work life. It has not only cemented my passion and love for teaching, but also gives me great motivation to keep up-to-date broadly across the entire informatics field.

For those who are unfamiliar with the 10x10 course, it is a repackaging of the introductory course in the OHSU Biomedical Informatics Graduate Program. This is the course taken by all students who enter the clinical informatics track of the OHSU program and aims to provide a broad overview of the field and its language. The course has no prerequisites, and does not assume any prior knowledge of healthcare, computing, or other topics. The course has ten units of material, with the graduate course spread over ten weeks and the 10x10 version decompressed to 14 weeks. The 10x10 course also features an in-person session at the end to bring participants together to interact and present project work. (The in-person session is optional for those who might have a hardship in traveling to it.)

The AMIA 10x10 program was launched in 2005 when AMIA wanted to explore online educational offerings. When the cost for development of new materials was found to be prohibitive, I presented a proposal to the AMIA Board of Directors for adapting the introductory online course I had been teaching at Oregon Health & Science University (OHSU) since 1999. Since then-President of AMIA Dr. Charles Safran was calling for one physician and one nurse in each of the 6000 US hospitals to be trained in informatics, I proposed the name 10x10, standing for "10,000 trained by 2010." We all agreed that the course would be mutually non-exclusive, i.e., other universities could offer 10x10 courses while OHSU could continue to employ the course content in other venues.

The OHSU course has, however, been the flagship course of the 10x10 program, and by the end of 2010, a total of 999 had completed it. We did not reach anywhere near that vaunted number of 10,000 by 2010, although we probably could have accommodated that many had they come forward, since distance learning is very scalable. After 2010 the course continued to be popular and in demand, so we continued to offer "10x10" and have done so to the present time.

This year now marks the tenth year that the course has been offered, and some 1837 people have completed the OHSU offering of 10x10. This includes not only general offerings with AMIA, but those delivered to various partners, including the American College of Emergency Physicians, the Academy of Nutrition and Dietetics, the Mayo Clinic, the Centers for Disease Control and Prevention, the New York State Academy of Family Physicians, and others. The course has also had international appeal, with it being translated and then adapted to Latin America by colleagues at Hospital Italiano of Buenos Aires in Argentina as well as being offered in its English version, with some local content and perspective added, in collaboration with Gateway Consulting in Singapore. Additional international offerings have been sponsored by King Saud University of Saudi Arabia and the Israeli Ministry of Health.

All told, the OHSU offering of the 10x10 program has accounted for 76% of the 2406 people who have completed any 10x10 course. The chart below shows the distribution of the institutions offering English versions of the course.

The 10x10 course has also been good for our informatics educational program at OHSU. As the course is a replication of our introductory course in our graduate program (BMI 510 - Introduction to Biomedical and Health Informatics), those completing the OHSU 10x10 course can optionally take the final exam for BMI 510 and then be eligible for graduate credit at OHSU (if they are eligible for graduate study, i.e., have a bachelor's degree). About half of the people completing the course have taken and passed the final exam, with about half of them (25% of total) enrolling in either our Graduate Certificate or Master of Biomedical Informatics program. Because our graduate program has a "building block" structure, where what is done at lower levels can be applied upward, we have had one individual who even started in the 10x10 course and progressed all the way to obtain a Doctor of Philosophy (PhD) from our program.
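As a quick check on the arithmetic behind the figures above, here is a minimal Python sketch. The two completion counts are those quoted in this posting; the 0.5 rates are an assumed rounding of "about half," and the variable names are purely illustrative.

```python
# Completion counts as quoted in this posting.
ohsu_completers = 1837  # people who completed the OHSU offering of 10x10
all_completers = 2406   # people who completed any 10x10 course

# Share of all 10x10 completers who took the OHSU offering.
ohsu_share = ohsu_completers / all_completers
print(f"OHSU share of all 10x10 completers: {ohsu_share:.0%}")

# "About half" take and pass the BMI 510 final exam, and "about half of them"
# go on to enroll. Both 0.5 values are assumed round figures, not exact rates.
exam_rate = 0.5
enroll_rate_given_exam = 0.5
enroll_share = exam_rate * enroll_rate_given_exam
print(f"Approximate share of completers who enroll: {enroll_share:.0%}")
```

Half of a half is a quarter, which is where the "25% of total" figure comes from.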

As I said at the end of 2010, the 10x10 program will continue as long as there is interest from individuals who want to take it. Given the continued need for individuals with expertise in informatics, along with rewarding careers for them to pursue in the field, I suspect the course will continue for a long time.

Friday, November 21, 2014

The Year in Review of Biomedical and Health Informatics - 2014

At this year's American Medical Informatics Association (AMIA) 2014 Annual Symposium, I was honored to be asked, along with fellow Oregon Health & Science University (OHSU) faculty member Dr. Joan Ash, to deliver one of the annual Year in Review sessions.

This session was first delivered in 2006 by Dr. Dan Masys, who presented an annual review of the past year's research publications and major events. Over time, parts of the annual review were broken off and focused on specific topics. The first of these were the annual reviews in translational bioinformatics (Dr. Russ Altman) and clinical research informatics (Dr. Peter Embi, an OHSU alumnus), presented at the annual AMIA Joint Summits on Translational Science. This year additional topics were peeled off, such as Informatics in the Media Year in Review (Dr. Danny Sands) as well as Public and Global Health Informatics Year in Review (Dr. Brian Dixon, Dr. Jamie Pina, Dr. Janise Richards, Dr. Hadi Kharrazi, and OHSU alumnus Dr. Anne Turner).

This pretty much left clinical informatics as the major topic for Joan and me to cover. However, I had also noted that this separating out of specific aspects of informatics left no one covering the fundamentals of informatics, i.e., topics underlying and germane to all aspects of informatics. We also noted that qualitative and mixed methods research had been historically underrepresented in these annual reviews. Therefore, Joan and I set the scope of our Year in Review session to clinical informatics and foundations of biomedical and health informatics. For research that was evaluative, Joan would cover qualitative and mixed methods studies, while I would cover studies using predominantly quantitative methods.

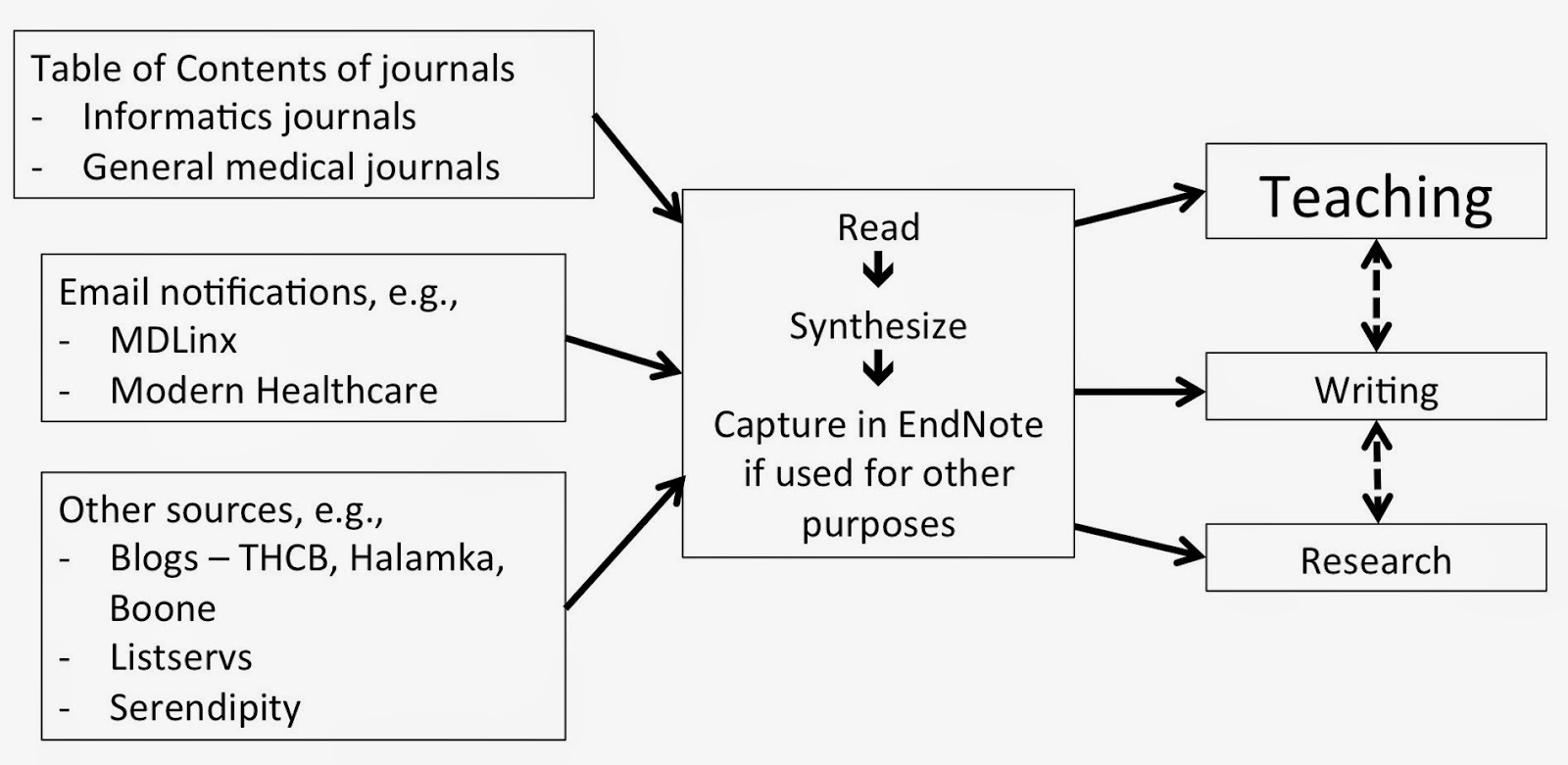

We also believed that while Dan's methods for gathering publications were sound, different approaches worked better for us. For my part, I decided to plug the annual review process into my existing workflow of uncovering important science and events in the field, which I spend a good amount of time doing in order to keep my introductory (10x10 and OHSU) course up to date. I comprehensively scan the literature as well as the news on a continuous basis to keep my teaching materials (and knowledge!) up to date. I actually created a slide in the presentation to show my normal workflow "methods," which informed my review and is shown below.

Our first annual review was presented at the AMIA 2014 Annual Symposium on November 18, 2014. Continuing Dan's tradition, we created a Web page that has a description of our goals and methods, a link to our slides, and all of the articles cited in our presentation. We also kept the traditional time frame for the "year" in review, which was from October 1, 2013 to September 30, 2014. One additional feature of the session that we added was to offer up the last 15 minutes for attendees to make their own nominations for publications or events to be included.

Joan and I were pleased with how the session went, and we were gratified by the positive response from attendees. We are hopeful to be invited back to present the session again next year!

Saturday, November 15, 2014

Continued Concerns for Building Capacity for the Clinical Informatics Subspecialty - 2014 Update

The first couple years of the clinical informatics subspecialty have been a great success. Last year, about 450 physicians became board-certified after the first certification exam, with many aided by the American Medical Informatics Association (AMIA) Clinical Informatics Board Review Course (CIBRC) that I directed. This year, another cohort took the exam, with many helped by the CIBRC course again. In addition this year, the Accreditation Council for Graduate Medical Education (ACGME) released its initial accreditation guidelines, and four programs (including ours at Oregon Health & Science University [OHSU]) became accredited, with a number of other programs in the process of applying.

Despite these initial positive outcomes, I and others still have many concerns for how we will build appropriate capacity in the new subspecialty. In particular, many of us are concerned that the number of newly certified subspecialists will slow to a trickle after 2018, once the "grandfathering" pathway is no longer available and the only route to certification will be through a two-year, on-site, full-time clinical fellowship. Indeed, the singular bit of advice I give to any physician who is currently "practicing" clinical informatics is to do whatever they can to get certified prior to 2018. It will be much more difficult to become certified after that, since the only pathway will be an ACGME-accredited fellowship.

I previously raised concerns about these challenges in postings last year and the year before, and this one represents an update leading into the annual AMIA Symposium. Colleague Chris Longhurst, whose fellowship program was the first to achieve accreditation, has expressed similar concerns in interviews by CMIO Magazine and HISTalk.

Looking forward, I see four major problems for the subspecialty. I will address each of these and then (since I am a solutions-oriented person) propose what I believe would be a better approach to the subspecialty.

The subspecialty excludes many physicians who do not have a primary specialty

When the AMIA leadership started developing a proposal for professional recognition of physicians in clinical informatics around 2006, they were advised that creating a new primary specialty would be a lot more difficult to sell politically and were instead advised to propose a new subspecialty. This would be unique as a subspecialty of all medical specialties. I am sure that advice was correct, but we have unfortunately excluded those who never obtained a primary clinical specialty or whose specialty certification has lapsed. These individuals can still be highly capable informaticians, and in fact many are. The alternate AMIA Advanced Interprofessional Informatics Certification being developed may serve these physicians, but it would be much better as a profession to have all physicians under a single certification.

The clinical fellowship model will exclude from training the many physicians who gravitate into informatics well after their initial training

The majority of physicians who work in clinical informatics did not start their careers in the field. Many gravitated into the field long after they completed their initial medical training, took a job, and established geographic roots and families. The distance learning graduate programs offered by OHSU and other universities have been a boon to these individuals, as they can train in informatics while keeping their current jobs and not needing to uproot their families. Many of these individuals have great experience, and many passed the initial board exam. They are clearly capable.

After 2018, the "grandfathering" pathway will no longer be an option, and the only way to achieve board certification will be via a full-time two-year fellowship. It is interesting to note the recent advice I heard expressed by Dr. Robert Wah, President of the American Medical Association. He noted that many physicians have moved beyond direct clinical care to have an impact in medicine in other ways. But he advised that every physician should establish their clinical career first and then move on to other pursuits. This too is at odds with the clinical fellowship model that almost by necessity must come during one's primary medical training.

In a similar vein, a number of colleagues who are subspecialists in other fields of medicine express concern that a clinical informatics subspecialty fellowship would add an additional two years of training on to the already lengthy training required of most highly specialized physicians. As much as I am an advocate of formal informatics training, I also recognize, and would even encourage, such training being integrated with other clinical training, especially in subspecialties.

The clinical fellowship model also is not the most appropriate way to train clinical informaticians

Even for those who are able to complete clinical informatics fellowships, the classic clinical fellowship training model is problematic, as those of us applying to ACGME have learned. I likened this process a few months ago to fitting square pegs into round holes.

Clinical medicine is very well suited to episodic learning and hence rotations. A patient comes in, and their current presentation is a nice segue into learning about the diseases they have, the treatments they are being given, and the course of their disease(s). Even patients being followed longitudinally in a continuity clinic have episodes of care with the healthcare system that provide good learning.

But informatics is a different kind of topic. Informatics is not an activity that takes place in episodes. You can't really learn from episodic exposure to it. Good informatics projects, such as a clinical decision support implementation or a quality improvement initiative, take place over time. In fact, learning is compromised when you jump in and/or leave in the middle. Informatics projects are also carried out by teams of people with diverse skills with whom the informatician must work. I would assert that better learning takes place when the informatics trainee encounters specific informatics issues (standards, security, change management, etc.) in the context of long-term projects.

There are other concerns that have arisen about various aspects of the ACGME accreditation process. One program was declined accreditation because a program director was not in the same primary specialty as the Residency Review Committee (RRC), despite the fact that clinical informatics is supposed to span all specialties. ACGME also requires any fellowship program, no matter how small, to have 2.0 FTE of combined director and faculty time. This may make sense in a clinical setting where faculty are simultaneously engaged in care of the same patient, but does not fit well when a trainee is working on single aspects of a larger project. Another ACGME requirement is for all faculty who teach to be named, and for those who are named to be board-certified clinical informaticians. This again does not make sense in the context of informatics being an activity with participants from many disciplines outside of medicine, some even outside of healthcare. Finally, ACGME requires fellows to be paid. This is easier to do when fellows are actively involved in the clinical operations of the hospital. Even if these trainees cannot bill, they do make it easier for attending physicians and hospitals to bill.

The funding model for fellowships creates challenges for their sustainability

A final challenge for clinical informatics fellowships is their funding and sustainability. Most subspecialty training in the US is funded by academic hospitals, and part of the "grand bargain" of such training is that clinical trainees provide inexpensive labor, which "extends" the ability of their teachers to provide care. The various clinical units have incentive to do this because it increases the ability of the units to provide and bill for services. Clinical informatics is different in that fellows will be unlikely to provide direct capacity benefit to academic clinical informatics departments. Our department at OHSU, for example, does not have operational clinical IT responsibilities.

Furthermore, these fellows will be doing their clinical practice in their primary specialties, and not their clinical informatics subspecialty. The primary specialties will include the full gamut of medical specialties such as internal medicine, radiology, pathology, and others. Even if fellows will be able to bill, it will be challenging within organizations for units to divide up the revenues.

Solutions

In last year's post I proposed a solution to these problems, and what follows is an updated version. There are approaches that could be rigorous enough to ensure an educational and training experience at least as robust as that of the proposed fellowship model. These approaches would no doubt test the boundaries of a tradition-bound organization like ACGME but could also show innovation reflective (and indeed required) of modern medical training generally.

A first solution is to provide a pathway for any physician to become certified in clinical informatics, whether having a primary board certification or not. Informatics as a subspecialty of any medical specialty is a contortion. I do not buy that one cannot be a successful clinical informatician without having a primary board certification. I and likely everyone else in the field know of too many counter-examples to that.

Moving on to specifics of training, last year I noted that there should be three basic activities of clinical informatics subspecialty trainees:

- Clinical informatics education to master the core knowledge of the field

- Clinical informatics project work to gain skills and practical experience

- Clinical practice to maintain their skills in their primary medical specialty

Next, how would trainees get their practical hands-on project work? Again, many informatics programs, certainly ours, have developed mechanisms by which students can do internships or practicums in remote locations through a combination of affiliation agreements, local mentoring, and remote supervision. While our program currently has students performing these for 3-6 months at a time, I see no reason why the practical experience could not be expanded to a year or longer. Strict guidelines for experience and both local and remote mentoring could be put in place to ensure quality.

Lastly, what about clinical practice? As noted above, I disagree that this should even be a requirement. But if it were, requiring a trainee to perform a certain volume of clinical practice, while adhering to all appropriate requirements for licensure and maintenance of certification, should be more than adequate to ensure practice in their primary specialty. Many informatics distance learning students are already maintaining their clinical practices to maintain their livelihood. Making clinical practice explicit, instead of as something requiring supervision, will also allow training to be more financially viable for the fellow. Any costs of tuition and practical work could easily be offset by clinical practice revenue.

There would need to be some sort of national infrastructure to set standards and monitor progress of clinical informatics trainees. There are any number of organizations that could perform this task, such as AMIA, and it could perhaps be a requirement of accreditation. Indeed, ACGME and the larger medical education community may learn from alternative approaches like this for training in other specialties. One major national concern these days is that the number of residency positions for medical school graduates is not keeping up with the increases in medical school enrollment or, for that matter, the national need for physicians. It is possible that alternative approaches like this could expand the capacity of all medical specialties and subspecialties, and not just clinical informatics.

Wednesday, November 12, 2014

Ebola is a Reason for Implementing ICD-10, or is it? What is the Role of Coding in the Modern EHR Era?

The recent Ebola outbreak has been used to justify or advocate many things. Among them is further advocacy for the transition to the ICD-10 coding system. However, when discussing Ebola and coding, it also gives us a chance to pause and address some larger issues around coding in the modern era of the electronic health record (EHR).

Coding of medical records is a requirement for billing, i.e., diagnosis codes must be included on a claim to obtain reimbursement for services from an insurer, whether a private insurance company or the government. The coding system currently used in the United States is ICD-9-CM, but its replacement with ICD-10-CM has been mandated, although the deadline has been postponed three times over the last four years. This coding also potentially creates a vast source of data for research, surveillance, and other purposes. Indeed, there is a whole body of research based on such "claims data," with one of the arguments for its use being that what this data lacks in depth or completeness is made up for by its volume [1].

ICD-9-CM has many limitations as a coding system. Probably its biggest limitation is that many codes cover a whole swath of diseases. In addition, the meaning of its "not otherwise specified" codes may change over time, when one of the conditions previously lumped under such a code is given a code of its own.

What does this have to do with Ebola? In ICD-9-CM, Ebola is one of many diagnoses covered by the code, 078.89 - Other specified diseases due to viruses. There are about 35 viruses that map to this code, including some common ones such as coronavirus and rotavirus. ICD-10-CM, on the other hand, has a specific code, A98.4 - Ebola virus disease.
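The difference in granularity can be illustrated with a minimal Python sketch. It uses only the codes mentioned above and is an illustration under stated assumptions, not an official code table: the two dictionaries hold a small subset of diagnoses (the roughly 35 viruses sharing 078.89 are not all listed), and the names `ICD9_CM`, `ICD10_CM`, `diagnoses_by_code`, and `is_specific` are hypothetical.

```python
from collections import defaultdict

# Illustrative subset only, taken from the codes discussed in this post.
# These tables are assumptions for the sketch, not a complete code set.
ICD9_CM = {
    "Ebola virus disease": "078.89",
    "Coronavirus infection": "078.89",
    "Rotavirus infection": "078.89",
}
ICD10_CM = {
    "Ebola virus disease": "A98.4",
}


def diagnoses_by_code(code_table):
    """Invert a diagnosis -> code table into code -> sorted list of diagnoses."""
    inverted = defaultdict(list)
    for diagnosis, code in code_table.items():
        inverted[code].append(diagnosis)
    return {code: sorted(names) for code, names in inverted.items()}


def is_specific(code, code_table):
    """Return True when the code identifies exactly one diagnosis in the table."""
    return len(diagnoses_by_code(code_table).get(code, [])) == 1


if __name__ == "__main__":
    # A claim carrying 078.89 cannot tell us which virus was diagnosed.
    print(diagnoses_by_code(ICD9_CM)["078.89"])
    print(is_specific("078.89", ICD9_CM), is_specific("A98.4", ICD10_CM))
```

In this toy setting, a claim coded 078.89 is ambiguous among several viruses, while A98.4 points to a single diagnosis.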

Does this provide justification for the move to ICD-10-CM? ICD-10-CM is clearly more detailed and granular, and in fact may be excessively granular. Another concern about implementing ICD-10-CM is the cost to physicians and hospitals, which is mostly unknown, with estimates varying widely [2, 3]. There is no question that ICD-9-CM falls short and that ICD-10-CM does have a specific code in the case of Ebola. But is this itself a reason justifying the move to ICD-10-CM, or are there other ways to determine from a medical record whether a diagnosis has been made?

When controversial questions arise, I always find it useful to step back and ask some questions, such as what we are trying to accomplish and whether this is the best way of doing so. There is actually a body of scientific literature that has assessed the consistency and value of coding medical records. One systematic review of United Kingdom coding studies found that coding accuracy in the UK varied widely, with a mean accuracy of 80.3% for diagnoses and 84.2% for procedures [4]. Accuracy, however, ranged from 50.5% to 97.8%. Another systematic review looked at heart failure diagnoses in Canadian hospitals, finding both ICD-9 and ICD-10 coding to vary widely in sensitivity of actual diagnosis (29-89%) and kappa scores of inter-assigner agreement (0.39-0.84) [5]. A US-based systematic review of identifying heart failure with diagnostic coding data found positive predictive value to be reasonably high (87-100%) but sensitivity to be lower [6]. Studies of diagnosis codes for hypertension [7] and obesity [8] found low sensitivity but higher specificity.
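For readers less familiar with the measures reported in these studies, the following Python sketch shows how sensitivity, specificity, positive predictive value, and Cohen's kappa are typically computed. It is a sketch under stated assumptions rather than the method of any cited study: the function names `validity` and `cohen_kappa` are hypothetical, the inputs are parallel lists of booleans (coded diagnosis present or absent versus a reference standard such as chart review), every denominator is assumed to be nonzero, and the data are invented for illustration.

```python
def validity(coded, reference):
    """Compare coded diagnoses against a reference standard.

    Both arguments are parallel lists of booleans, one entry per record.
    Assumes at least one record in each relevant cell so no denominator is zero.
    """
    pairs = list(zip(coded, reference))
    tp = sum(1 for c, r in pairs if c and r)          # coded and truly present
    fp = sum(1 for c, r in pairs if c and not r)      # coded but truly absent
    fn = sum(1 for c, r in pairs if not c and r)      # missed by coding
    tn = sum(1 for c, r in pairs if not c and not r)  # correctly not coded
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
    }


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two coders assigning a yes/no code to the same records."""
    n = len(rater_a)
    observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n
    p_a = sum(rater_a) / n  # proportion of "yes" from coder A
    p_b = sum(rater_b) / n  # proportion of "yes" from coder B
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)  # agreement expected by chance
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Invented example: 10 records, 4 with the condition by chart review.
    reference = [True, True, True, True, False, False, False, False, False, False]
    coded = [True, True, False, False, True, False, False, False, False, False]
    print(validity(coded, reference))
    print(cohen_kappa([True, True, False, False], [True, False, False, False]))
```

Low sensitivity with high specificity, as reported for hypertension and obesity, corresponds to coding that misses many true cases but is rarely applied to patients without the condition.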

While these studies show that coding data is imperfect, there was a time when the predominance of paper medical records meant there was no alternative source of data that could be analyzed. However, we are now in an era of widespread EHR adoption, which means that there are other sources of data to document diagnoses, testing, and treatments. In the case of Ebola, we have many other possible sources of data, as described by the Centers for Disease Control and Prevention.

While there is certainly no evidence that our entire medical record coding enterprise should be immediately abandoned, there is definitely a case to reassess its necessity and value in the modern EHR era. This is especially the case when we are using EHR data for so many other purposes [9]. As with many questions, dispassionate science and analysis are the best approach to providing us with answers.

References

1. Ferver, K, Burton, B, et al. (2009). The use of claims data in healthcare research. The Open Public Health Journal. 2: 11-24.

2. Hartley, C and Nachimson, S (2014). The Cost of Implementing ICD‐10 for Physician Practices – Updating the 2008 Nachimson Advisors Study. Baltimore, MD, Nachimson Advisors, LLC. http://www.ama-assn.org/resources/doc/washington/icd-10-costs-for-physician-practices-study.pdf.

3. Kravis, TC, Belley, S, et al. (2014). Cost of converting small physician offices to ICD-10 much lower than previously reported. Journal of AHIMA, http://journal.ahima.org/wp-content/uploads/Week-3_PDFpost.FINAL-Estimating-the-Cost-of-Conversion-to-ICD-10_-Nov-12.pdf.

4. Burns, EM, Rigby, E, et al. (2012). Systematic review of discharge coding accuracy. Journal of Public Health. 34: 138-148.

5. Quach, S, Blais, C, et al. (2010). Administrative data have high variation in validity for recording heart failure. Canadian Journal of Cardiology. 26: e306-e312.

6. Saczynski, JS, Andrade, SE, et al. (2012). A systematic review of validated methods for identifying heart failure using administrative data. Pharmacoepidemiology and Drug Safety. 21: 129-140.

7. Tessier-Sherman, B, Galusha, D, et al. (2013). Further validation that claims data are a useful tool for epidemiologic research on hypertension. BMC Public Health. 13: 51. http://www.biomedcentral.com/1471-2458/13/51.

8. Lloyd, JT, Blackwell, SA, et al. (2014). Validity of a claims-based diagnosis of obesity among medicare beneficiaries. Evaluation and the Health Professions. Epub ahead of print.

9. Hersh, WR (2014). Healthcare Data Analytics. In Health Informatics: Practical Guide for Healthcare and Information Technology Professionals, Sixth Edition. R. Hoyt and A. Yoshihashi. Pensacola, FL, Lulu.com: 62-75.

Coding of medical records is a requirement for billing, i.e., diagnosis codes must be included on a claim to obtain reimbursement for services from an insurer, whether a private insurance company or the government. The coding system currently used in the United States is ICD-9-CM, but its replacement with ICD-10-CM has been mandated, although the deadline has been postponed three times over the last four years. This coding also potentially creates a vast source of data for research, surveillance, and other purposes. Indeed, there is a whole body of research based on such "claims data," with one of the arguments for its use being that what this data lacks in depth or completeness is made up for by its volume [1].

ICD-9-CM has many limitations as a coding system. Probably its biggest limitation is that many codes cover a whole swath of diseases. In addition, its "not otherwise specified" may change over time when one of the components of not being otherwise specified becomes specified.

What does this have to do with Ebola? In ICD-9-CM, Ebola is one of many diagnoses covered by the code, 078.89 - Other specified diseases due to viruses. There are about 35 viruses that map to this code, including some common ones such as coronavirus and rotavirus. ICD-10-CM, on the other hand, has a specific code, A98.4 - Ebola virus disease.

Does this provide justification for the move to ICD-10-CM? ICD-10-CM is clearly more detailed and granular, and in fact may be excessively granular. Another concern about implementing ICD-10-CM is the cost to physicians and hospitals, which are mostly unknown although estimates vary widely [2, 3]. There is no question that ICD-9-CM falls short and that ICD-10-CM does have a specific code in the case of Ebola. But is this itself a reason justifying the move to ICD-10-CM, or are there other ways to determine from a medical record whether a diagnosis has been made?

When controversial questions arise, I always find it useful to step back and ask some questions, such as what we are trying to accomplish and whether it is the best way for doing so? There is actually a body of scientific literature that has assessed the consistency and value of coding medical records. One systematic review of United Kingdom coding studies found that coding accuracy in the UK varied widely, with a mean accuracy of 80.3% for diagnoses and 84.2% for procedures [4]. The range of accuracy, however, was from 50.5-97.8%. Another systematic review looked at heart failure diagnoses in Canadian hospitals, finding both ICD-9 and ICD-10 coding to vary widely in sensitivity of actual diagnosis (29-89%) and kappa scores of inter-assigner agreement (0.39-0.84) [5]. A US-based systematic review of identifying heart failure with diagnostic coding data found positive predictive value to be reasonably high (87-100%) but sensitivity to be lower [6]. Studies of diagnosis codes for hypertension [7] and obesity [8] found low sensitivity but higher specificity.

While these studies show that coding data is imperfect, there was a time when the predominance of paper medical records meant there was no alternative to data that could be analyzed. However, we are now in an era of widespread EHR adoption, which means that there are other sources of data to document diagnoses, testing, and treatments. In the case of Ebola, we have many other possible sources of data, as described by the Centers for Disease Control.

While there is certainly no evidence that our entire medical record coding enterprise should be immediately abandoned, there is definitely a case to reassess its necessity and value in the modern EHR era. This is especially the case when we are using EHR data for so many other purposes [9]. As with many questions, dispassionate science and analysis is the best approach to providing us with answers.

References

1. Ferver, K, Burton, B, et al. (2009). The use of claims data in healthcare research. The Open Public Health Journal. 2: 11-24.

2. Hartley, C and Nachimson, S (2014). The Cost of Implementing ICD‐10 for Physician Practices – Updating the 2008 Nachimson Advisors Study. Baltimore, MD, Nachimson Advisors, LLC. http://www.ama-assn.org/resources/doc/washington/icd-10-costs-for-physician-practices-study.pdf.

3. Kravis, TC, Belley, S, et al. (2014). Cost of converting small physician offices to ICD-10 much lower than previously reported. Journal of AHIMA, http://journal.ahima.org/wp-content/uploads/Week-3_PDFpost.FINAL-Estimating-the-Cost-of-Conversion-to-ICD-10_-Nov-12.pdf.

4. Burns, EM, Rigby, E, et al. (2012). Systematic review of discharge coding accuracy. Journal of Public Health. 34: 138-148.

5. Quach, S, Blais, C, et al. (2010). Administrative data have high variation in validity for recording heart failure. Canadian Journal of Cardiology. 26: e306-e312.

6. Saczynski, JS, Andrade, SE, et al. (2012). A systematic review of validated methods for identifying heart failure using administrative data. Pharmacoepidemiology and Drug Safety. 21: 129-140.

7. Tessier-Sherman, B, Galusha, D, et al. (2013). Further validation that claims data are a useful tool for epidemiologic research on hypertension. BMC Public Health. 13: 51. http://www.biomedcentral.com/1471-2458/13/51.

8. Lloyd, JT, Blackwell, SA, et al. (2014). Validity of a claims-based diagnosis of obesity among Medicare beneficiaries. Evaluation and the Health Professions. Epub ahead of print.

9. Hersh, WR (2014). Healthcare Data Analytics. In Health Informatics: Practical Guide for Healthcare and Information Technology Professionals, Sixth Edition. R. Hoyt and A. Yoshihashi. Pensacola, FL, Lulu.com: 62-75.

Wednesday, November 5, 2014

Two Recent Research Briefs Reiterate the Need for Clinical Decision Support

One of the seminal papers in informatics was published in 1978, when Octo Barnett and colleagues demonstrated that while computer-based feedback could positively impact physician decision-making, that impact went away when the feedback was removed [1]. This has always been a rationale for clinical decision support (CDS), which helps clinicians by reminding them to do the right thing rather than by imparting learning.

Two recent research briefs demonstrate how challenging it is to get physicians to be appropriate stewards of antibiotics, and they have implications for CDS. Antibiotics were one of the miracles of 20th century medicine, leading to a substantial ability to fight infection. They are still an important part of the armamentarium of medicine, but their value is threatened by growing resistance of organisms [2].

One research brief finds that the likelihood of antibiotic prescribing becomes higher as the day goes on, which the researchers attribute to "decision fatigue" [3]. Another brief shows that implementation of a physician audit and feedback program reduced inappropriate antibiotic prescribing, but that removal of the program resulted in a return toward baseline prescribing habits [4]. This pattern has been observed in other similar programs [5].

Practicing medicine is a complex task. Although physicians have always been assumed to maintain the entire knowledge base in their heads, decades of informatics-related research have shown otherwise. Of course, the way we implement CDS is imperfect, often providing advice that physicians do not need [6]. A big challenge going forward will be to optimize the signal relative to the noise and determine the best ways to deliver that signal.

References

1. Barnett, GO, Winickoff, R, et al. (1978). Quality assurance through automated monitoring and concurrent feedback using a computer-based medical information system. Medical Care. 16: 962-970.

2. Anonymous (2013). Antibiotic Resistance Threats in the United States, 2013. Atlanta, GA, Centers for Disease Control and Prevention.

3. Linder, JA, Doctor, JN, et al. (2014). Time of day and the decision to prescribe antibiotics. JAMA Internal Medicine. Epub ahead of print.

4. Gerber, JS, Prasad, PA, et al. (2014). Durability of benefits of an outpatient antimicrobial stewardship intervention after discontinuation of audit and feedback. Journal of the American Medical Association. Epub ahead of print.

5. Arnold, SR and Straus, SE (2005). Interventions to improve antibiotic prescribing practices in ambulatory care. Cochrane Database of Systematic Reviews. 2005(4): CD003539.

6. Nanji, KC, Slight, SP, et al. (2014). Overrides of medication-related clinical decision support alerts in outpatients. Journal of the American Medical Informatics Association. 21: 487-491.

Thursday, October 30, 2014

OHSU Clinical Informatics Fellowship Accredited and Accepting Applications

The Oregon Health & Science University (OHSU) Clinical Informatics Fellowship Program is accepting applications for its inaugural class of fellows to begin in July, 2015. The program was notified by the Accreditation Council for Graduate Medical Education (ACGME) in September, 2014 that it received initial ACGME accreditation. The program is now launching its application process for its initial group of trainees. These fellowships are for physicians who seek to become board-certified in the new subspecialty of clinical informatics. Many graduates will likely obtain employment in the growing number of Chief Medical Information Officer (CMIO) or related positions in healthcare and vendor organizations.

This fellowship will be structured more like a traditional clinical fellowship than the graduate educational program model that our other offerings follow. Fellows will work through various rotations in different healthcare settings, not only at OHSU Hospital but also at the Portland VA Medical Center. They will also take classes in the OHSU Graduate Certificate Program that will provide them the knowledge base of the field and prepare them for the board certification exam at the end of their fellowship. The program Web site describes the curriculum and other activities in the fellowship.

It is important to note that this clinical informatics fellowship is an addition to the suite of informatics educational offerings by OHSU and does not replace any existing programs. OHSU will continue to have its graduate program (Graduate Certificate, two master's degrees, and PhD degree) as well as its other research fellowships, including the flagship program funded by the National Library of Medicine. The student population will continue to include not only physicians, but also those from other healthcare professions, information technology, and a wide variety of other fields. Job opportunities across biomedical and health informatics continue to be strong and well-compensated.

OHSU was the third program in the country to receive accreditation. Several other programs are also in the process of seeking accreditation, and a number of them will be using OHSU distance learning course materials for the didactic portion of their programs. (This summer, the first two fellows in the Stanford Packard Children's Hospital fellowship program took the introductory biomedical informatics course from OHSU.)

As defined by ACGME, clinical informatics is "the subspecialty of all medical specialties that transforms health care by analyzing, designing, implementing, and evaluating information and communication systems to improve patient care, enhance access to care, advance individual and population health outcomes, and strengthen the clinician-patient relationship." The new subspecialty was launched in 2013, with physicians already working in the field able to sit for the certification exam by meeting prior practice requirements. Starting in 2018, this "grandfathering" pathway will go away, and only those completing an ACGME-accredited fellowship will be board-eligible. Last year, seven OHSU faculty physicians became board-certified in the new clinical informatics subspecialty, including the program director (William Hersh, MD) and two Associate Program Directors (Vishnu Mohan, MD, MBI; Thomas Yackel, MD, MS, MPH).

We look forward to a great group of applicants and the launch of the fellowship next summer. We also look forward to working with colleagues launching similar programs at other institutions as the field of clinical informatics begins to take hold.

Tuesday, October 21, 2014

What are Realistic Goals for EHR Interoperability?

Last week, the two major advisory committees of the Office of the National Coordinator for Health IT (ONC) met to hear recommendations from ONC on the critical need to advance electronic health record (EHR) interoperability going forward. The ONC Health IT Policy Committee and the ONC Health IT Standards Committee endorsed a draft roadmap for achieving interoperability over 10 years, with incremental accomplishments at three and six years. The materials from the event are worth perusing.

The ONC has been facing pressure for more action on interoperability. Although great progress has resulted from the HITECH Act in terms of achieving near-universal adoption of EHRs in hospitals (94%) [1] and among three-quarters of physicians [2], the use of health information exchange (HIE), which requires interoperability, is far lower. Recently, about 62% of hospitals reported exchanging varying amounts of data with outside organizations [3], with only 38% of physicians exchanging data with outside organizations [4]. A recent update of the annual eHI survey shows there are still considerable technical and financial challenges to HIE organizations that raise questions about their sustainability [5]. The lag of HIE behind EHR adoption was among the reasons that led ONC to publish a ten-year vision for interoperability in the US healthcare system [6].